I facilitated my first Agile retrospective today, going over a six-month project with 12 people, mostly from Elcom's development team, along with one or two others from the systems admin, project management and implementation teams. We followed the format suggested by the Retrospective Goddesses in their Agile Retrospectives book. It was an interesting and rewarding experience, so I thought I'd document it here.

Alan Lee, Rajesh Stephen and Chae Wan Kim do some group work.

This project had been a large and challenging one for us, whilst still a success for the ultimate client. It had sucked in most of the development team at one point and I was one of the few people not directly involved. When our management decided to run the retrospective they had envisaged nothing more than a brainstorming session and an opportunity to vent some emotions.

We had purchased the Agile Retrospectives book some months ago and I suggested that our Technical Director run it using the book - except it had somehow gotten stuck in my bookshelf at home. Whilst bringing it in I flicked through it and noticed that the main facilitator role sounded exactly like the facilitator role I've been fulfilling in the Valiant Man courses I've been involved in this year. So I volunteered myself as the facilitator, negotiated to increase the time from one to two hours and promptly got stuck with all the preparation involved!

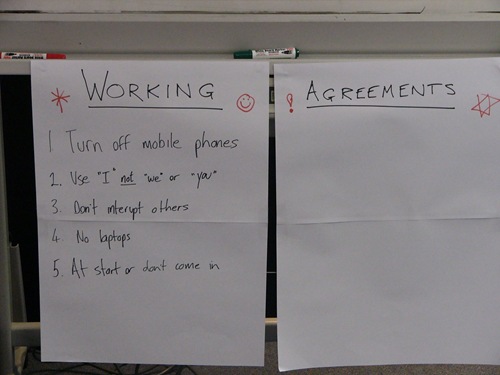

Working Agreement

As the person with the most experience of this sort of group work I suggested most of the agreements, but the group did come up with "Don't interrupt others" on their own. Going "topless" was particularly hard for the managers in the group - but I think it really helped them engage with the process.

Goal

This was an interesting one. I had selected a reasonably neutral, positive (and useful) goal by focusing on one of the unusual characteristics of the project: our work with a partner organisation. But the team thought we should also add a focus on big projects, as this was another defining characteristic of this particular project.

I then got everyone to give us a quick one or two word description of their feeling or state at the end of this project. This was interesting as we got flat out positive and negative responses as well as mixed ones like, "stressed, happy." The range of emotions described was a reminder that not everyone sees things the same way.

Information Gathering

I chose the timeline method to draw out details from the group, as that would allow us to vary the amount of time we spent on it and, for a six-month-long project, would help people remember their experiences.

Tracey Schneider, Gavin Silver, Balaji Sridharan and Chae Wan Kim place their cards

The group broke out into three groups of 4 and worked on identifying important events in the project timeline, categorised into client (blue), Elcom (green), people/team (pink) and technical (yellow). I had previously prepared the whiteboard by separating it into months and noting the number of days of developer time used in each one.

We ended up with a very interesting overview of the project that included many events that people had forgotten, or not even known about, as well as some that everyone was affected by (we stacked duplicate cards on top of each other). I mostly stood back and watched (that's me below, closest to the camera), and occasionally stepped in to help people understand how to handle duplicates (stacking), confusion about long-running events (put multiple cards across months at the same level) and disagreements about what was an event (pretty much anything could be).

Craig Bailey, Tracey Schneider and Gavin Silver check out the cards, watched by me.

Once each group had their cards up, I asked people to review the board, one group at a time, and discuss what others had identified, as well as looking for duplicates or events still missing. That only got us one or two extra events, but gave everyone a chance to see the total timeline up close.

I then told each participant that they had five positive (green) dots and five negative (red) dots to apply to cards on the timeline - but they could only put one dot on each card. They came up in pairs to dot the cards. I wanted to use colour coded dots, but we made do with red and green pens. You can see the result below:

People wanted to know how to identify what was positive, or negative, but I pointed out it wasn't supposed to be scientific, just a subjective review of their feelings about the events listed. That worried people less than I thought it might.

Once they had all finished I took over and went through the events that seemed the most interesting (lots of green dots, red dots, or a mix). I also pointed out events that were usually regarded as important, but that ended up having little significance to the team.

Some of the event cards turned out to be confusing, so I also queried what they meant and made sure I added text where necessary to help clarify their meaning. One of the events that had a real mix of dots turned out to have been interpreted in two completely different ways, so we drew a line between the dots and added text to each side to explain further.

Identify Experiments and Actions

Unfortunately time was short for this retrospective, so I had planned a quick 5 Whys activity to help the team quickly find useful insights. They broke up into small groups/pairs (two groups of 3 and three pairs) and started asking why.

5 Whys has one participant ask the others in their group why an event was important, positive or negative. It was left pretty open for them to choose their favourite item from the timeline. Once they got the answer, they then asked why that was important/relevant. They would do this a total of 5 times, and only record the answers to the fourth and fifth questions. Every member of each group got a chance to do this at least once and we got some funny and insightful responses as a result (on one of the cards below, the fifth answer was that the person was "speechless", so they drew an empty word bubble!).

Next was the only awkward part of the retrospective (from my perspective). I had planned for people to vote on the items with one stroke per card, on as many cards as they wanted (hat-tip to ThoughtWorkers Lachlan Heasman/Jason Yip for that one!), but the criterion I wanted to use was "which item would you most want to work on". Indeed this is what I announced to the group, but I noticed when people actually came up to vote that the question/answer cards did not identify the experiment/action that could be taken to answer them.

We were over-time by this stage, so I made sure everyone knew what the problem was, but let them keep voting anyway. I then had to step in to organise the cards by stroke-votes (as shown above) and then run a quick discussion of what steps might be taken for each one.

Summary

I'm really proud of how enthusiastic the development team were in approaching this. I did a Return On Time Invested poll at the end of the event and on a scale of 0-4 (0=No return, 2=Break even, 4=High return) most of the guys thought it was a 4, some thought it was a 3 and a quarter of the group rated it as a 2 (one of those turned out to have misunderstood what we were measuring and later said she would have voted 4 too!).

We should be making this a regular part of our Agile process and I will certainly be agitating for it to occur more often. I'm sure that having more retrospectives will help identify some of the more fundamental issues that affect the development team and get us continually improving.

How long were your sprints within the project? You might want to look at running shorter retros at the end of each sprint.

I tend to find that end of project retros become more of a venting session, and given that the horse has already bolted - few actions actually get carried into the next project.

By carrying out retros at the end of each sprint, you tend to get smaller (more actionable) outcomes that allow continuous improvement.

The team seem to focus more on the present, e.g. "how can we fix this problem" rather than "what can we do to stop the same problems from happening in the next project".

I still think end of project retros are important, but our team definitely improves more during the project, rather than at its completion.

Tyson, that's a good point. I think larger projects like this, particularly ones that involve a lot of custom work, tend to not be managed well by our normal sprint processes.

Having said that, hopefully retrospectives will seem more manageable and useful going forward and we will be doing them every sprint.

This is a very detailed write-up of the session Angus - thanks for sharing so much. It sounds like some important issues were identified.

You certainly wouldn't be the first to have a retrospective run over time before getting actions out (especially when you are trying to cover 6 months' worth of work - phew!). These days, I tend to create a retrospective plan that includes strict timeboxes for each activity and I usually find that I need to squeeze the time allowed on data gathering and generating insights somewhat to ensure that a good block of time is left for deciding on and volunteering for actions.

For actions, I look for them to be phrased so that they are sufficiently specific and measurable that we can clearly determine whether they were achieved when we get back together at the next retrospective. You might want to consider "SMART" actions to help achieve that.

Anyway, this is a really nice first retrospective story. Like you, I hope that it becomes a regular part of your Agile process so that you give yourself a mechanism to keep improving.